School-based internet access boosted test scores in Peru, but these effects took time to materialise

Technology has become increasingly prevalent in schools worldwide (World Bank 2018, Escueta et al. 2017, One Laptop per Child 2016, UNESCO 2012, Trucano 2016, International Telecommunication Union 2014). The promise of boosting modern-day digital competencies, promoting interactive student-centred teaching models, and providing up-to-date learning materials even in remote areas has encouraged low- and middle-income countries (LMICs) to invest considerably in Information and Communication Technologies (ICTs) in schools.

Among ICTs, the internet may have particularly important pedagogical uses in LMICs. Internet access can provide underserved students with otherwise unavailable sources of information (Levin and Arafeh 2002). Similarly, the internet can expand teachers’ access to references and teaching aids as well as their ability to share information among peers (Jackson and Makarin 2018, Purcell et al. 2013). However, as with many new technologies, benefits may materialise only after a period of learning and adaptation, which makes it important to understand the dynamic effects of ICT interventions over time.

Using extended evaluation windows to capture the medium-run effects of ICTs

Few studies have rigorously evaluated the impact of the internet on student performance in LMICs. Though previous research in high-income countries has reached mixed conclusions on the effectiveness of home-based internet access as a learning input (Belo et al. 2014, Faber et al. 2015, Gibson and Oberg 2004, Goolsbee and Guryan 2006, Machin et al. 2007, Vigdor et al. 2014), school-based connectivity may be more important in LMICs due to lower levels of teacher skills, larger class sizes, and limited access to other conventional inputs.

Moreover, most prior studies of ICTs have been based on short-term evaluation windows and are only designed to detect somewhat immediate treatment effects. Such studies may overlook longer-term impacts that follow from an initial learning period, during which teachers, students, and administrators adapt to new technology. Hence, detecting gains in learning that could arise over such a learning period requires a longer evaluation window.

In a recent paper (Lakdawala et al. 2022), we examine the impact of internet access on the performance of students who attended public primary schools in Peru that initially acquired internet between 2007 and 2020, or that remained unconnected by 2020. Over this period, more than 11,300 schools (which jointly enrol about two million students per year) gained access to the internet, largely through a national program – Plan Huascarán – which prioritised providing internet connections to public schools.

We link administrative data on school-based access to internet with students’ mathematics and reading scores from a large-scale national test that covers almost all second-graders in public schools in Peru between 2007 and 2016. We construct a panel dataset of around 23,300 schools, where we observe the scores of about 2.3 million second-grade students and use this dataset to study how test scores evolve before and after schools gain internet connections. Our strategy relies on comparisons of cohorts that attended the same school, but in the years before and after that school was connected to the internet.

Internet connections at school lead to higher test scores for subsequent cohorts of students, as schools and teachers adapt to new technology

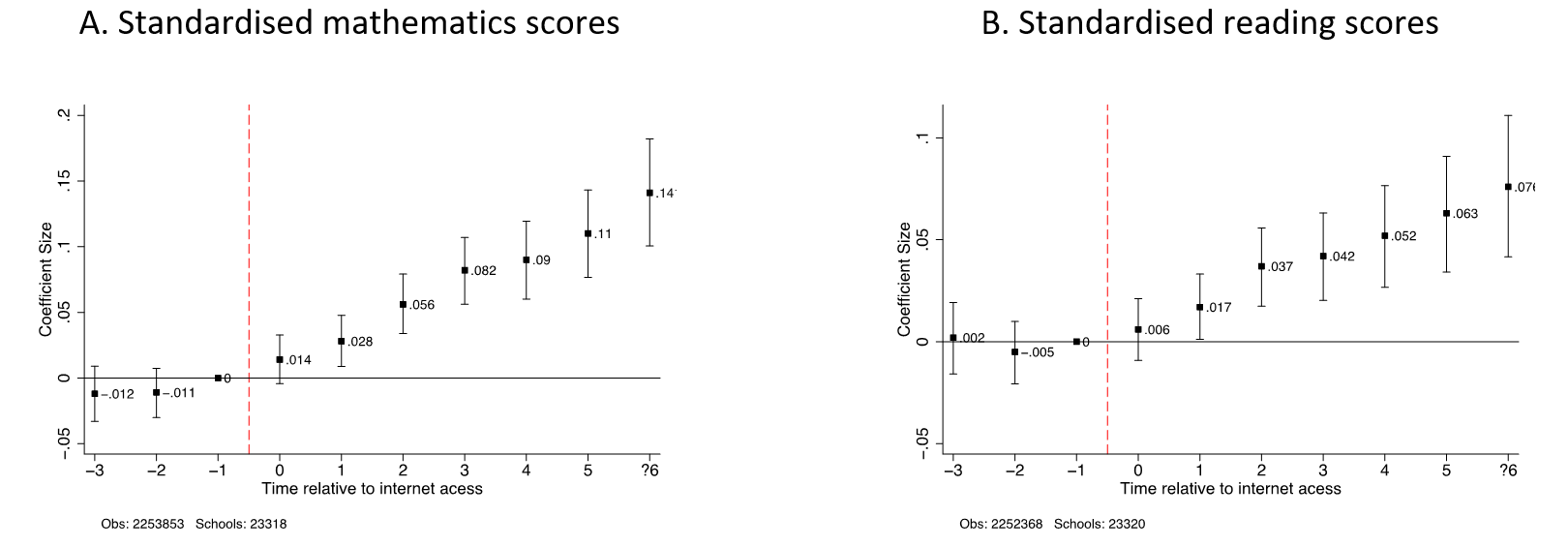

We find that internet access leads to modest test score improvements of 0.028 standard deviations in mathematics and 0.017 standard deviations in reading in the first year after installation (Figure 1). In other words, cohorts that attended a school about one year after it was connected to the internet score 0.028 standard deviations higher on mathematics tests than cohorts that attended the same school in the year before installation. This means that schools move up in the distribution of average test scores after they receive internet installations (see footnote 1).

Importantly, though this effect is small in the first year, it grows significantly over time, reaching 0.11 and 0.063 standard deviations five years after installation for mathematics and reading respectively. That is, a second-grade student in a school that has had internet for five years performs 0.11 standard deviations better on mathematics tests than a second-grade student at the same school before internet was installed. The trajectory of estimated effects implies that, over time, schools become more efficient at using the internet to improve students’ test scores.

Figure 1: Impact of internet access on test scores

Notes: The above figures plot the coefficients and 95% confidence intervals from estimating a regression of test scores (mathematics and reading) on indicator variables of the timing of internet access, school fixed effects, year × terciles of baseline school enrolment, and a set of time-varying school controls. Scores are standardised within each calendar year to have mean zero and standard deviation of one across the universe of test takers in public schools. Coefficients capture the increase in test scores relative to the year before internet installation (t = −1). The sample includes all grade 2 students in all public schools that gain internet between 2007 and 2020 or remain unconnected by 2020. Standard errors are clustered at the school level. For more details, please see Lakdawala et al. (2022).
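For readers who prefer notation, the regression described in these notes can be written roughly as the event-study specification below. This is a sketch under our own assumed notation, not the exact equation from the paper: $y_{ist}$ is the standardised score of student $i$ in school $s$ and year $t$, $T_s$ is the (assumed) label for the year school $s$ gains internet, $q(s)$ is the school's tercile of baseline enrolment, and $X_{st}$ collects the time-varying school controls. How the paper treats the earliest and latest event periods (for example, by binning them) is not specified here.

```latex
\[
y_{ist} \;=\; \alpha_s
\;+\; \sum_{k \neq -1} \beta_k \,\mathbf{1}\!\left[\, t - T_s = k \,\right]
\;+\; \gamma_{t,\,q(s)}
\;+\; X_{st}'\delta
\;+\; \varepsilon_{ist}
\]
% \alpha_s        : school fixed effects
% \beta_k         : effect k years from installation, relative to the omitted period k = -1
% \gamma_{t,q(s)} : year-by-baseline-enrolment-tercile effects
% \varepsilon_{ist}: error term, with standard errors clustered at the school level
```

Under this reading, each plotted point in Figure 1 corresponds to one $\beta_k$, and schools that remain unconnected by 2020 help to identify the year effects $\gamma_{t,\,q(s)}$ and the coefficients on the controls.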

We interpret this growth in impacts over time as reflecting an adaptation period, during which schools must learn to integrate new technologies. Schools respond to internet access by hiring teachers with formal training in digital skills, and this process builds only gradually. Schools are 27% more likely to have a computer-trained teacher by the fifth year after installation relative to before internet access. That the gradual growth over time in test scores shadows growth in the staffing of computer-trained teachers suggests that complementary investment in staff computer proficiency is needed to fully exploit internet-enabled classroom capabilities. This finding aligns with previous literature examining the impacts of general-purpose technologies and the complementary investments and organisational changes that ultimately drive long-run productivity gains (e.g. Brynjolfsson and Hitt 2000).

Internet connections are important for both students and teachers

There appear to be several additional channels through which internet access improves test scores. First, using the Peruvian National Household Survey, we show that internet access at schools leads to meaningful increases in student use of the internet. Six or more years after gaining connections at their schools, public primary school students report an increase in internet usage of 122% relative to the period before their local schools become connected. This suggests that increased use of internet by students partially explains the improvements in student performance.

Second, we present descriptive evidence of teachers’ use of internet as a pedagogical tool using two nationally representative teacher surveys. Teachers report that internet is one of the most important materials enhancing teaching. Moreover, teachers at schools with good quality internet connections also report less difficulty performing teaching activities than those without internet connections at school. Taken together, these findings indicate that access to online materials may boost student performance above and beyond the impacts of direct student use of internet-connected computers.

Measuring outcomes in the medium-run (instead of the short-run) matters

The size and time span of our data present opportunities to complement and contextualise existing studies of ICTs as schooling inputs. On the one hand, previous research on ICTs has found that providing ICT hardware with few or no complementary learning tools has little or no immediate impact on student test performance (Bet et al. 2014, Barrera-Osorio and Linden 2009, Cristia et al. 2017). Our short-run results (based on up to one year after internet installation) confirm that any effects of school-based internet access are small in magnitude, and thus perhaps impossible to detect in the smaller samples of schools that are typical of randomised controlled trial (RCT) evaluations of schooling ICTs. On the other hand, medium-run gains are sizable, pointing towards the necessity of a longer evaluation window for understanding the effectiveness of ICT interventions.

Providing internet access in schools appears comparable to other interventions in terms of cost-effectiveness. We find that the yearly cost per student of raising test scores by 0.01 standard deviations ranges between US$0.60 and US$5.20, depending on the test subject and the assumptions about the cost of internet installation. This range makes school-based internet neither the most nor the least cost-effective within a wide variety of educational interventions in a number of different settings. With future potential technological advances leading to reductions in the cost of internet provision, the cost-effectiveness of this policy could increase.
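To make the cost-effectiveness metric concrete, the arithmetic behind a "cost per 0.01 standard deviations" figure can be sketched as follows. This is an illustration only: the function name is ours, the cost input is a made-up placeholder rather than a figure from the paper, and the paper's actual calculation may differ (for example, in how installation costs are spread over years).

```python
def cost_per_hundredth_sd(annual_cost_per_student_usd: float, effect_sd: float) -> float:
    """Yearly cost per student of raising scores by 0.01 standard deviations.

    Both arguments are supplied by the caller; nothing here comes from the paper.
    """
    if effect_sd <= 0:
        raise ValueError("effect_sd must be positive to compute a cost per unit of gain")
    # Scale the yearly per-student cost down to the cost of one 0.01 SD step.
    return annual_cost_per_student_usd * 0.01 / effect_sd


# HYPOTHETICAL input: an assumed yearly cost of US$11 per student.
hypothetical_cost = 11.0
# Effect sizes reported above for five years after installation.
for subject, effect in [("mathematics", 0.11), ("reading", 0.063)]:
    cost = cost_per_hundredth_sd(hypothetical_cost, effect)
    print(f"{subject}: US${cost:.2f} per 0.01 SD")
```

For a given cost, the smaller reading effect implies a higher cost per 0.01 standard deviations than the mathematics effect, which is one reason the reported range depends on the test subject.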

References

Barrera-Osorio, F, and L Linden (2009), “The Use and Misuse of Computers in Education: Evidence from a Randomized Experiment in Colombia”, The World Bank Policy Research Working Paper 4836.

Belo, R, P Ferreira, and R Telang (2014), “Broadband in School: Impact on Student Performance”, Management Science, 60(2): 265–282.

Bet, G, P Ibarraran, and J Cristia (2014), “The Effects of Shared School Technology Access on Students’ Digital Skills in Peru”, Inter-American Development Bank Working Paper Series 476.

Brynjolfsson, E, and L M Hitt (2000), “Beyond Computation: Information Technology, Organizational Transformation and Business Performance”, Journal of Economic Perspectives, 14(4): 23–48.

Cristia, J, S Cueto, P Ibarraran, A Santiago, and E Severin (2017), “Technology and Child Development: Evidence from the One Laptop per Child Program”, American Economic Journal: Applied Economics, 9(3): 295–320.

Escueta, M, V Quan, AJ Nickow, and P Oreopoulos (2017), “Education Technology: An Evidence-based Review”, NBER Working Paper 23744.

Faber, B, R Sanchis-Guarner, and F Weinhardt (2015), “ICT and Education: Evidence from Student Home Addresses”, NBER Working Paper 21306.

Gibson, S and D Oberg (2004), “Visions and Realities of Internet Use in Schools: Canadian Perspectives”, British Journal of Educational Technology, 35(5): 569–585.

Goolsbee, A and J Guryan (2006), “The Impact of Internet Subsidies in Public Schools”, The Review of Economics and Statistics, 88(2): 336–347.

International Telecommunication Union (2014), “Final WSIS Target Review: Achievements, Challenges and the Way Forward”, Partnership on Measuring ICT for Development, Geneva, Switzerland.

Jackson, K and A Makarin (2018), “Can Online off-the-Shelf Lessons Improve Student Outcomes? Evidence from a Field Experiment”, American Economic Journal: Economic Policy, 10(3): 226–54.

Lakdawala, L, E Nakasone, and K Kho (2022), “Dynamic Impacts of School-based Internet Access on Student Learning: Evidence from Peruvian Public Primary Schools”, American Economic Journal: Economic Policy, forthcoming.

Levin, D and S Arafeh (2002), “The Digital Disconnect: The Widening Gap between Internet-Savvy Students and their Schools”, American Institutes for Research: Washington, DC. Report prepared for the Pew Internet and American Life Project.

Machin, S, S McNally, and O Silva (2007), “New Technology in Schools: Is there a Payoff?”, The Economic Journal, 117(522): 1145–1167.

One Laptop per Child (2011), “Internet Connectivity: The Achilles Heel of the Peru Deployment”, olpcnews.com, January 28.

Purcell, K, A Heaps, J Buchanan, and L Friedrich (2013), “How Teachers are using Technology at Home and in their Classrooms”, PEW Research Center, Washington DC.

Trucano, M (2012), “Evaluating One Laptop Per Child (OLPC) in Peru”, blogs.worldbank.org, March 23.

Vigdor, J L, H F Ladd, and E Martinez (2014), “Scaling the Digital Divide: Home Computer Technology and Student Achievement”, Economic Inquiry, 52(3): 1103–1119.

World Bank (2018), “World Development Report 2018: Learning to Realize Education’s Promise”, The World Bank, Washington, DC.

Footnotes

1 We follow the convention in the education literature and report our effect sizes in standard deviations. The estimates express the size of the effect relative to the control group’s distribution of scores. If our outcome is normally distributed, an effect size of 0.1 standard deviations means that the treated group’s average test score is at the 54th percentile of the control group’s distribution. Expressing treatment effects in this way allows us to better understand the magnitude of an effect in outcomes where the units of measurement are not intuitive or standardised – for example, across tests that use different scales. It also enables us to compare our results with results from other studies.
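The percentile conversion in this footnote can be reproduced with the standard normal cumulative distribution function. The short sketch below assumes, as the footnote does, that scores are normally distributed; the function name is ours and is used only for illustration.

```python
from statistics import NormalDist


def percentile_in_control(effect_size_sd: float) -> float:
    """Percentile of the control distribution reached by the treated group's mean.

    Assumes control-group scores follow a standard normal distribution,
    so an effect of d standard deviations maps to 100 * Phi(d).
    """
    return 100 * NormalDist().cdf(effect_size_sd)


# An effect of 0.1 standard deviations, as in the footnote's example.
print(round(percentile_in_control(0.1)))  # prints 54
```

The same function can be applied to the effect sizes reported in the text (for example, 0.028 or 0.11 standard deviations) to express them as percentiles of the comparison distribution.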